AI hallucination: Why it happens and how to prevent it in the age of agentic AI

AI hallucination occurs when an artificial intelligence system generates a response that sounds plausible, even confident, but is factually incorrect, misleading or entirely fabricated.

As organizations deploy generative AI tools more broadly across customer experience (CX), these hallucinations could result in inaccurate answers to customer questions or flawed conversation summaries. The problem compounds with agentic AI, where systems act autonomously across customer journeys. AI hallucinations can disrupt service, damage trust and create real business risk.

Detecting and managing hallucinations is critical to ensure reliability, maintain customer trust and support agent decision-making. Organizations can reduce hallucinations by using responsible AI design that prioritizes accuracy, governance and transparency.

What is AI hallucination?

Hallucinations can occur across formats, including outputs from text generators, voice interactions and even generated images.

These errors reduce system reliability and weaken user trust. Over time, repeated inaccuracies make users and customers question whether they can trust that AI tool, AI in general, or the company that chose to deploy it.

In customer experience, hallucinations can mislead both customers and employees. A virtual agent, sometimes referred to as an AI chatbot, might provide the wrong policy details, or an internal AI assistant might summarize customer history incorrectly. As AI systems gain autonomy, the decisions they make and the data shaping them carry real regulatory and reputational consequences.

Understanding why AI hallucinates is the first step to preventing it.

Why accuracy matters for CX leaders

Accuracy and consistency form the backbone of customer trust. When AI delivers incorrect or biased information, customers lose confidence in the brand, not just the technology.

Reliable AI interactions support loyalty and protect reputation. Customers value honesty and consistency, especially when they are asking for help or making important decisions. For CX leaders, AI enables and scales those interactions.

Accuracy also enables efficiency and empathy. When AI responses are accurate, agents spend less time fixing errors and more time being present and empathetic with customers. This balance enables service teams to operate at scale without sacrificing care.

Preventing hallucinations produced by AI systems is about more than reducing risk. It is about delivering dependable experiences that customers remember for the right reasons.

Why does AI hallucinate? Core causes

AI hallucinations are not merely random glitches. They usually stem from identifiable factors in large language model (LLM) design, training data, retrieval and prompting. With the right safeguards, AI-powered enterprises can significantly reduce and manage them.

Data gaps and bias

AI relies on data to make predictions and generate responses. When training data is incomplete, outdated or biased, the system fills gaps with assumptions.

Most AI models are trained on time-bound data sets and designed to make predictions based on patterns gleaned from that data. Their effectiveness suffers when they encounter newer information that falls outside that training.

When a model lacks data or context, its probability-based approach leads it to fill in gaps, see faulty patterns and fabricate information. Poor data quality leads directly to unreliable outputs. If an AI system has never seen a specific scenario, it may invent a response that sounds reasonable but is wrong.

Another problem is bias, whether it is rooted in the training data itself or introduced by the people who assemble the data set. For example, some data sets may represent only certain geographies, or cover a timeframe that excludes certain groups or roles.

In a contact center, that can lead to unfair or inconsistent treatment of customers, such as reinforcing stereotypes, misinterpreting intent and offering different levels of service.

Data gaps and bias are inevitable, but they are recognizable and traceable to the source. Mitigating them is essential to building ethical, inclusive AI systems that deliver fair and trustworthy customer experiences.

Overfitting and context loss

Overfitting occurs when AI models learn patterns too narrowly. They perform well in familiar scenarios but fail when conditions change.

Context loss is common in CX environments where conversations span channels. A customer may start in chat, move to voice and then follow up by email. If context does not carry across tools, the AI loses track of intent.

Fragmented systems increase these risks. When customer data lives in disconnected tools, AI lacks a complete view of context.

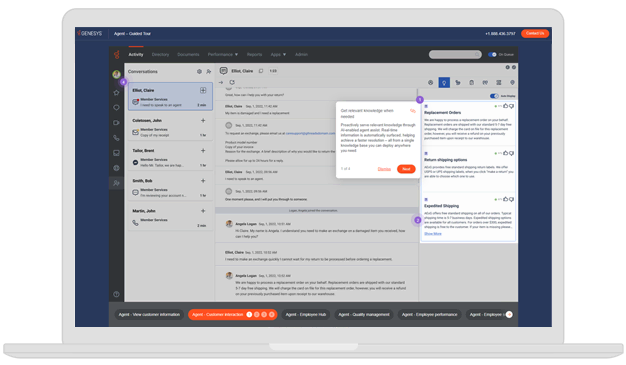

Orchestration platforms maintain continuity by preserving conversation history and situational context. For example, a contact center AI that remembers a prior refund request won’t repeat questions or offer incorrect information.
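As a rough illustration of that continuity, the sketch below keeps one shared history per customer that every channel writes to and reads from. The class, function and field names are hypothetical, and the refund details are made-up sample data; this is not how any particular platform implements it.

```python
from dataclasses import dataclass, field


@dataclass
class ConversationContext:
    """Hypothetical shared context for one customer, across all channels."""
    customer_id: str
    events: list = field(default_factory=list)

    def record(self, channel: str, intent: str, detail: str) -> None:
        # Every channel appends to the same history instead of keeping its own.
        self.events.append({"channel": channel, "intent": intent, "detail": detail})

    def find(self, intent: str):
        # Return the first earlier event with this intent, from any channel.
        return next((e for e in self.events if e["intent"] == intent), None)


def next_prompt(ctx: ConversationContext, intent: str) -> str:
    prior = ctx.find(intent)
    if prior is not None:
        # Reuse what the customer already said instead of asking again.
        return f"I see your {intent} request from {prior['channel']}: {prior['detail']}"
    return f"Can you tell me more about your {intent} request?"


# Illustrative data only: the customer started in chat, then called in.
ctx = ConversationContext("cust-001")
ctx.record("chat", "refund", "order 1234, damaged item")
print(next_prompt(ctx, "refund"))
```

The design point is simply that the voice channel reads the same record the chat channel wrote, so the AI has no gap to fill with a guess.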

Prompt ambiguity and intent confusion

Unclear or incomplete instructions force AI to guess. When prompts lack detail, the system fills gaps with plausible but false information. In CX, intent confusion can lead to misrouted interactions or inaccurate responses, such as sending a billing question to technical support.

This behavior explains many AI hallucination examples. The model is not lying; it is trying to identify patterns and predict the next action. If the picture isn’t complete, the anticipated outcome might not fit the intent.

Specific prompts and intent recognition models reduce this risk. In practice, teams can:

- Focus on sentiment analysis and intent recognition to decipher the meaning behind a customer’s words.

- Guide callers with context-aware prompts and direct them to the right channel or agent based on their needs, minimizing transfers and repetition.

- Surface relevant knowledge from connected knowledge bases and customize agent scripts so every support interaction feels tailored, even at enterprise scale.
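One way to picture intent-based routing is a router that asks a clarifying question whenever it is unsure, rather than guessing. The sketch below is a minimal example under stated assumptions: the keyword lists stand in for a trained intent recognition model, and the queue names and the 0.7 threshold are hypothetical.

```python
# Hypothetical routing table; queue names are illustrative only.
ROUTES = {"billing": "billing_queue", "technical": "tech_support_queue"}

# Toy keyword lists standing in for a trained intent recognition model.
KEYWORDS = {
    "billing": {"invoice", "charge", "refund", "bill"},
    "technical": {"error", "crash", "login", "password"},
}


def classify(message: str) -> tuple[str, float]:
    """Return (intent, confidence) from keyword overlap; a stand-in for a real model."""
    words = set(message.lower().split())
    scores = {intent: len(words & kws) for intent, kws in KEYWORDS.items()}
    total = sum(scores.values())
    if total == 0:
        return "unknown", 0.0
    best = max(scores, key=scores.get)
    # Confidence is the share of matched keywords that belong to the top intent.
    return best, scores[best] / total


def route(message: str, min_confidence: float = 0.7) -> str:
    intent, confidence = classify(message)
    # When the intent is unclear, ask rather than guess.
    if confidence < min_confidence:
        return "ask_clarifying_question"
    return ROUTES[intent]
```

A message that mixes billing and technical terms falls below the threshold and triggers a clarifying question, which is the behavior that keeps a billing question from landing in technical support.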

Lack of governance and guardrails

Without oversight, hallucinations go unchecked. Errors may seem minor at first but can scale quickly across thousands of interactions.

Governance refers to the rules, monitoring and review systems that guide AI outputs. Built-in guardrails and live oversight catch errors early and prevent the spread of misinformation.

Strong governance ensures that small mistakes do not become systemic failures.

Without foundational, diligent AI governance and guardrails, an enterprise not only limits the speed and scalability of its AI efforts, but also exposes the business to operational risk and reputational damage.

AI hallucination examples in real-world applications

Hallucinations are not theoretical. They already affect industries that depend on accuracy and trust.

Customer support miscommunication

A virtual agent tells a customer that an order has shipped when it has not, or it invents a refund policy that does not exist. The result is confusion, frustration and loss of trust.

Built-in AI reduces this risk by verifying order status and policies against real-time systems before responding.
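The verification step can be sketched as a check between the AI's drafted answer and the system of record. In this minimal example the dictionary stands in for a live order system, and the order IDs, statuses and function name are all hypothetical.

```python
# Hypothetical system-of-record data; in practice this would be a live lookup.
ORDER_SYSTEM = {"A100": "shipped", "A200": "processing"}


def verified_status_reply(order_id: str, drafted_status: str) -> str:
    """Only confirm a status that the system of record agrees with."""
    actual = ORDER_SYSTEM.get(order_id)
    if actual is None:
        # No record found: do not guess, hand off instead.
        return "I can't find that order. Let me connect you with an agent."
    if drafted_status != actual:
        # The draft disagrees with the record, so the record wins.
        return f"Your order {order_id} is currently {actual}."
    return f"Your order {order_id} is {drafted_status}."
```

Here a draft claiming that order A200 has shipped would be replaced with the recorded status before the customer ever sees it.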

Incorrect financial summary

An AI assistant misreads an account balance or summarizes a financial report incorrectly. Even small inaccuracies can create compliance headaches and erode confidence.

AI with contextual verification prevents these mistakes by grounding responses in validated data sources.

Policy recommendation error

An insurance AI recommends the wrong coverage tier based on limited data or poor validation. The customer receives advice that does not match their needs.

Integrated systems avoid these inconsistencies by connecting customer profiles, policy rules and eligibility data in one unified view.

How responsible AI design prevents hallucination

Reducing hallucinations requires intentional design choices, not reactive fixes. These choices span the underlying architecture, governance by design and the ability to incorporate real-time data.

Built-in AI architecture

AI that is native to a single platform operates within the same data environment across channels, journeys and touchpoints. That unified context helps reduce the errors that often arise when bolt-on tools rely on fragmented or incomplete information.

When guardrails, governance and compliance are built into the architecture from the start, businesses gain control and avoid the complexity of adding those protections later.

When evaluating platforms, prioritize native AI capabilities that run within the same system and data environment as the rest of the customer experience.

Governance by design

Governance defines how AI should behave before it is deployed. This includes predefined rules, monitoring, audit trails and policy compliance checks. Governance keeps AI decisions explainable and safe, especially in regulated environments.
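To make predefined rules and audit trails concrete, here is a minimal sketch of a policy check that runs on every reply and logs the result. The rule names, patterns and log structure are assumptions chosen for illustration, not a recommended rule set.

```python
import re
from datetime import datetime, timezone

# Hypothetical predefined rules: name -> pattern that must NOT appear in a reply.
BLOCKED_PATTERNS = {
    "no_guarantees": re.compile(r"\bguarantee(d)?\b", re.IGNORECASE),
    "no_card_numbers": re.compile(r"\b\d{16}\b"),
}

# In-memory audit trail; a real deployment would persist this somewhere durable.
AUDIT_LOG: list[dict] = []


def check_reply(reply: str) -> bool:
    """Return True if the reply passes every rule, and log the check either way."""
    violations = [name for name, pat in BLOCKED_PATTERNS.items() if pat.search(reply)]
    AUDIT_LOG.append({
        "checked_at": datetime.now(timezone.utc).isoformat(),
        "reply": reply,
        "violations": violations,
    })
    return not violations
```

Because passing replies are logged as well as failing ones, the trail can later show what the AI said and which rules were applied at the time.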

It’s important enough to repeat: Governance must be designed and embedded from the start, not added later.

Real-time data context

Accurate AI depends on continuous access to live data, often supported by techniques like retrieval-augmented generation (RAG) that ground responses in verified, up-to-date information. Static snapshots quickly become outdated.
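The retrieval half of RAG can be sketched in a few lines. The example below is a toy under stated assumptions: the knowledge base entries are made up, the retriever ranks passages by shared words where production systems typically use embedding search, and the model call itself is left out. It shows the two ideas that matter for hallucination: the prompt is restricted to retrieved sources, and no prompt is built when nothing relevant is found.

```python
from typing import Optional

# Hypothetical knowledge base entries; contents are illustrative only.
KNOWLEDGE_BASE = [
    {"id": "kb-1", "text": "Refunds are issued to the original payment method."},
    {"id": "kb-2", "text": "Standard shipping takes three to five business days."},
]


def retrieve(question: str, min_overlap: int = 2) -> list:
    """Toy retriever: rank passages by the number of words shared with the question."""
    q_words = set(question.lower().strip("?.!").split())
    scored = []
    for doc in KNOWLEDGE_BASE:
        d_words = set(doc["text"].lower().strip(".").split())
        overlap = len(q_words & d_words)
        if overlap >= min_overlap:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored]


def build_grounded_prompt(question: str) -> Optional[str]:
    """Build a prompt restricted to retrieved sources, or None if nothing matched."""
    passages = retrieve(question)
    if not passages:
        return None  # Nothing to ground on: the caller should decline or escalate.
    sources = "\n".join(f"[{p['id']}] {p['text']}" for p in passages)
    return (
        "Answer using only the sources below. "
        "If they do not cover the question, say you do not know.\n"
        f"{sources}\n"
        f"Question: {question}"
    )
```

Returning `None` for an uncovered question is a deliberate choice in this sketch: it forces the calling code to decline or hand off instead of letting the model improvise.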

Experience orchestration maintains real-time context across channels. Unified platforms that connect to live data streaming and analytics enable AI to respond based on what is happening now, not what happened yesterday.

This real-time visibility helps reduce outdated or incomplete responses and minimizes the blind spots and delays created by siloed systems.

Human oversight and transparency

Humans play an essential role in responsible AI. By handling routine, data-heavy or time-sensitive tasks, AI frees people to focus on work that requires judgment, creativity and empathy.

Human-in-the-loop processes help teams review outputs, manage exceptions and improve performance over time. Built-in safeguards can also escalate requests that exceed the AI’s limits to human agents, while enabling supervisors to intervene when human judgment matters most.
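An escalation safeguard of this kind might look like the following sketch. The topic list, the 0.8 confidence floor and the action names are hypothetical, and the confidence score is assumed to come from the model or a separate scoring step.

```python
from dataclasses import dataclass


@dataclass
class DraftReply:
    text: str
    confidence: float  # assumed to come from the model or a separate scorer
    topic: str


# Hypothetical policy: topics that always need a person, and a confidence floor.
HUMAN_ONLY_TOPICS = {"legal", "account_closure"}
CONFIDENCE_FLOOR = 0.8


def decide(reply: DraftReply) -> str:
    """Choose who handles the reply: the AI, a supervisor or a human agent."""
    if reply.topic in HUMAN_ONLY_TOPICS:
        # Some requests exceed the AI's limits regardless of confidence.
        return "escalate_to_agent"
    if reply.confidence < CONFIDENCE_FLOOR:
        # Uncertain drafts get a human review before anything is sent.
        return "send_to_supervisor_review"
    return "send_to_customer"
```

Checking the topic before the confidence score means a confident answer on a restricted topic still goes to a person.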

Transparency reinforces trust by making AI decisions more understandable and accountable. CX leaders need clear visibility into how models are trained, how data is sourced and secured, and how bias is monitored and mitigated.

Minimizing AI hallucinations with built-in trust

Responsible, accurate AI is essential as agentic AI takes on more autonomous roles.

Data gaps, context loss, ambiguous prompts and weak governance lead to AI hallucinations, which can weaken system reliability, undermine customer trust and trigger regulatory and reputational consequences.

Fortunately, each of those causes has a clear solution rooted in integrated design and oversight.

Choose a cloud-based platform that offers unified data, built-in AI and transparent governance. This approach protects accuracy while enabling innovation at scale.

Learn how the Genesys Cloud™ platform helps drive accuracy and empathy at scale.