Coordinating collaborative AI agents for customer experience

Enterprises use LLM agent orchestration to connect customer-facing and back-office AI agents into a unified experience. One agent might track conversation context while another retrieves data or recommends the next-best action. Because the orchestration layer passes context between agents, handoffs stay seamless and resolutions become faster and more personalized across channels.

Automating multi-step service workflows

Organizations can design orchestration patterns that let LLM agents complete multi-step processes autonomously. For example, an orchestrated AI system can verify identity, retrieve account data, summarize customer history and draft a personalized response, all before human review. This speeds up handling and supports compliance while reducing manual effort.
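
The four steps in that example can be sketched as a simple sequential pipeline. This is a minimal illustration, not a reference to any specific product: the `Case` record, the `ACCOUNTS` lookup table and every step function are hypothetical stand-ins, and the summarizing and drafting steps use plain string handling where a real system would call an LLM.

```python
from dataclasses import dataclass, field

# Hypothetical in-memory stand-in for a real account system.
ACCOUNTS = {
    "c-100": {
        "name": "Dana",
        "history": ["Billing dispute resolved", "Upgraded plan"],
    }
}


@dataclass
class Case:
    """Shared context that every step reads from and writes to."""
    customer_id: str
    message: str
    data: dict = field(default_factory=dict)
    status: str = "open"


def verify_identity(case: Case) -> None:
    # Assumption: a known customer ID counts as verified.
    if case.customer_id not in ACCOUNTS:
        raise PermissionError("identity could not be verified")
    case.data["verified"] = True


def retrieve_account(case: Case) -> None:
    case.data["account"] = ACCOUNTS[case.customer_id]


def summarize_history(case: Case) -> None:
    # Placeholder for an LLM summarization call.
    case.data["summary"] = "; ".join(case.data["account"]["history"])


def draft_response(case: Case) -> None:
    # Placeholder for an LLM drafting call.
    name = case.data["account"]["name"]
    case.data["draft"] = f"Hi {name}, thanks for contacting us about: {case.message}"


STEPS = [verify_identity, retrieve_account, summarize_history, draft_response]


def run_workflow(case: Case) -> Case:
    """Run each step in order, then stop so a person can review the draft."""
    for step in STEPS:
        try:
            step(case)
        except PermissionError:
            # Failed verification ends the automated path early.
            case.status = "escalated"
            return case
    case.status = "awaiting_human_review"
    return case
```

Calling `run_workflow(Case("c-100", "a late fee"))` leaves the case in the `awaiting_human_review` state with a draft attached, so nothing is sent until a person approves it.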

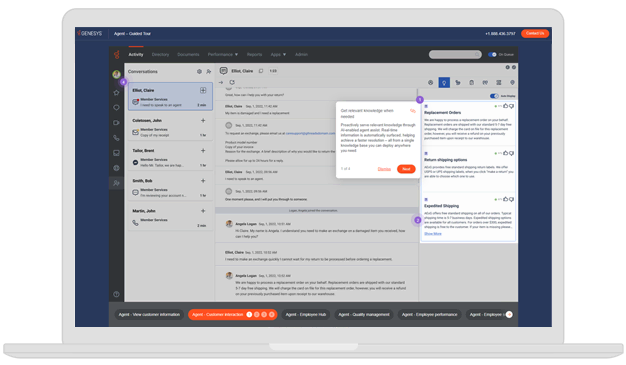

Enhancing knowledge management and insights

With orchestrated agents, enterprises can centralize and govern AI-driven insights. LLM agents collaborate to search internal knowledge bases, summarize policies and recommend improvements. Orchestration ensures each agent’s output is validated, traceable and aligned with brand and regulatory standards.
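
One way to picture validated and traceable output is an orchestrator that checks every agent result against policy and appends it to a log. The sketch below is illustrative only: the `Orchestrator` class, the `BANNED_PHRASES` rule and the "must cite a source" check are assumed examples of brand and regulatory policy, not rules taken from any real platform.

```python
import time
import uuid
from dataclasses import dataclass

# Hypothetical brand/regulatory rule: phrases an agent must never emit.
BANNED_PHRASES = ("guaranteed refund", "legal advice")


@dataclass
class TraceEntry:
    trace_id: str
    agent: str
    output: str
    approved: bool
    reasons: list
    timestamp: float


def validate(output: str, sources: list) -> list:
    """Return the list of policy problems; an empty list means approved."""
    reasons = []
    if not sources:
        reasons.append("no source cited")
    for phrase in BANNED_PHRASES:
        if phrase in output.lower():
            reasons.append(f"banned phrase: {phrase}")
    return reasons


class Orchestrator:
    def __init__(self) -> None:
        self.trace = []  # append-only record of every agent output

    def record(self, agent: str, output: str, sources: list) -> TraceEntry:
        """Validate an agent's output and log it, approved or not."""
        reasons = validate(output, sources)
        entry = TraceEntry(
            trace_id=uuid.uuid4().hex,
            agent=agent,
            output=output,
            approved=not reasons,
            reasons=reasons,
            timestamp=time.time(),
        )
        self.trace.append(entry)
        return entry
```

Rejected outputs are logged alongside approved ones, which keeps the audit trail complete when a reviewer needs to see why a recommendation was blocked.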

Powering dynamic workforce assistance

Within workforce engagement, orchestrated LLM agents can act as copilots for scheduling, coaching and analytics. By exchanging data in real time, these AI agents help supervisors predict staffing needs, assess performance trends and guide human agents toward better customer outcomes, all within the same orchestration layer.

Scaling responsible AI across systems

Through orchestration, enterprises can control how LLM agents interact with sensitive data and third-party tools. Guardrails and governance policies embedded in the agent orchestration platform maintain security, transparency and ethical use, which is critical for regulated industries.
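
A guardrail of this kind can be sketched as a gate that every tool call passes through. The example assumes a per-agent allowlist (`TOOL_POLICY`) and a basic redaction rule for card-like numbers. Both are hypothetical, and the regular expression is a deliberately crude placeholder for a real sensitive-data detector.

```python
import re

# Hypothetical policy: which tools each agent is allowed to call.
TOOL_POLICY = {
    "billing_agent": {"lookup_invoice", "issue_credit"},
    "faq_agent": {"search_kb"},
}

# Crude stand-in for a sensitive-data detector: 13 to 16 digit runs.
CARD_PATTERN = re.compile(r"\b\d{13,16}\b")


class PolicyViolation(Exception):
    """Raised when an agent requests a tool it is not allowed to use."""


def redact(text: str) -> str:
    return CARD_PATTERN.sub("[REDACTED]", text)


def call_tool(agent: str, tool: str, payload: str, tools: dict) -> str:
    """Check the allowlist, redact the payload, then invoke the tool."""
    if tool not in TOOL_POLICY.get(agent, set()):
        raise PolicyViolation(f"{agent} may not call {tool}")
    return tools[tool](redact(payload))
```

Because the check lives in the orchestration layer rather than in each agent, the policy can be changed in one place without modifying any agent.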