In 2022, it was estimated that conversational AI would reduce labour costs by $80 billion. When ChatGPT became available, the focus shifted to generative AI — and that technology continues to dominate the conversations among customer experience (CX) professionals. The promise of easy access to information for customers, delivered naturally and with personality, is an attractive proposition. It’s especially attractive to those who have been working with conversational AI. Some might question whether continuing conversational AI investments make sense in this generative AI future.

Ringing the death knell for conversational AI seems premature. Gartner reported in 2023 that conversational AI is the fastest growing segment for the contact centre. In the same study, the firm found that interactions that involve AI are augmented, not fully offloaded. This means there’s a need for the human touch to complete the experience.

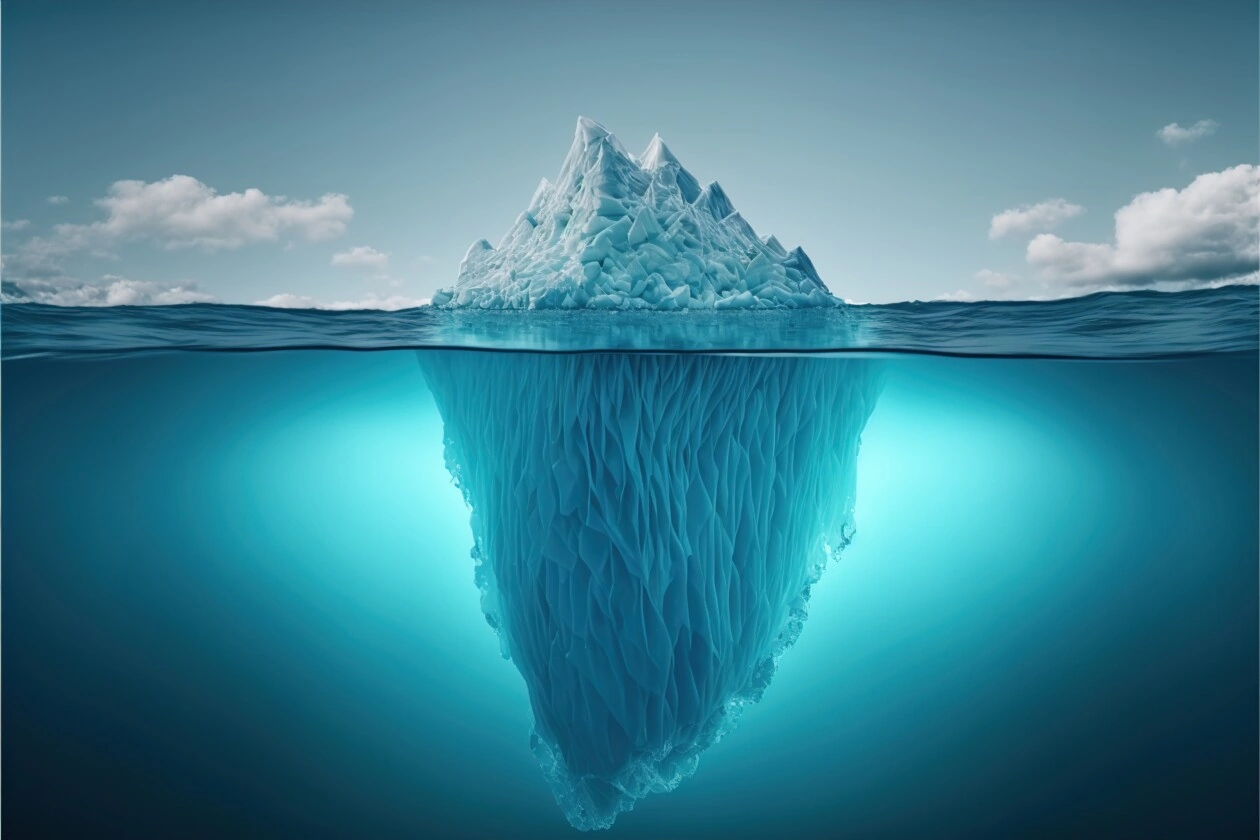

Clearly, we’re not yet ready to replace what we’ve been working with — and there’s room for generative and conversational AI to coexist. While generative AI has the promise to improve how customer experience is delivered, there is still untapped potential in how conversational AI is applied.

The difference between the application of generative AI and conversational AI for customer experience lies in the nature of the conversation that happens between the consumer and their product/service provider. With generative AI, the conversation is informational, fluid and might cover a broad set of topics. It will feel like you’re talking to a friend who can tell you about your destination with interesting details and can give you various options on how to reach the destination. However, this friend can’t just drive you there or call you a cab.

With conversational AI, the conversation will be more confined, potentially more robotic, and it could be digital or voice-based. Interactive voice response (IVR) systems that used to require “Press 1 for Yes, 2 for No” input now incorporate conversational AI to enable natural language conversations, sometimes augmented with DTMF (dual-tone multi-frequency) signalling, which lets callers use the dial pad to send information to a system.

The conversation will be focused. These conversations are built to approximate human service interactions and typically end with an action: a purchase, a return, an account reset or an escalation.

There are also differences for those who are building conversational experiences into interaction journeys. With generative AI, prompt engineering coupled with a pre-trained large language model can enable an automatic conversation. Prompt engineering means finding a way of extracting the right data from a model that has been trained on a very wide set of data gathered from virtually anywhere and anything. It’s asking AI the right question with the right parameters to give it the right frame of reference to retrieve the right information. It’s both art and science.

Conversational AI bots are designed as conversational flows with defined paths based on intents. Conversational AI bots are programmed to respond to certain questions within a specific domain. Asking conversational AI a question that doesn’t match what it knows will result in a response such as, “Please repeat your question.”
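To make the idea of intents and a fallback concrete, here is a minimal sketch in Python. It assumes a simple keyword-overlap matcher; the intent names, keyword sets and the `match_intent` and `respond` functions are all hypothetical, and real conversational AI platforms use trained language-understanding models rather than keyword lists.

```python
import re

# Hypothetical intents and trigger keywords for one narrow domain (assumption).
INTENT_KEYWORDS = {
    "check_balance": {"balance", "funds"},
    "reset_password": {"password", "reset", "locked"},
    "return_item": {"return", "refund"},
}

# What the bot says when nothing in its domain matches.
FALLBACK_RESPONSE = "Please repeat your question."


def match_intent(utterance):
    """Return the intent sharing the most keywords with the utterance, or None."""
    words = set(re.findall(r"[a-z']+", utterance.lower()))
    best_intent, best_score = None, 0
    for intent, keywords in INTENT_KEYWORDS.items():
        score = len(words & keywords)
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent


def respond(utterance):
    """Answer in-domain questions; fall back when no intent matches."""
    intent = match_intent(utterance)
    if intent is None:
        return FALLBACK_RESPONSE
    return f"Matched intent: {intent}"


if __name__ == "__main__":
    print(respond("What is my account balance?"))
    print(respond("Tell me about the weather in Paris"))
```

The second question falls outside the bot's domain, so it gets the fallback response rather than an invented answer.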

With generative AI, that same situation is unlikely. Generative AI models are typically trained to provide some type of answer, because the data set that was used for the training is much bigger. Generative AI algorithms calculate the next best thing to say based on learned language patterns. Some generative AI models will provide wrong answers that sound right, which is known as a hallucination.

Conversational AI is used to take the customer request and extract an intent: the essence of the ask. The conversational design will determine what will happen once an intent is identified. Conversational designers can build in a data look-up (e.g., “show me my account balance”), or craft an answer based on a knowledge base.
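What happens after an intent is identified can be sketched as a simple dispatch table. This is an illustration only, under stated assumptions: the `ACCOUNTS` and `KNOWLEDGE_BASE` dictionaries stand in for real back-end systems, and the handler names and the `fulfil` function are hypothetical, not part of any Genesys product.

```python
# Hypothetical data standing in for a real account system (assumption).
ACCOUNTS = {"alice": 1250.75, "bob": 40.00}

# Hypothetical knowledge base with one canned answer (assumption).
KNOWLEDGE_BASE = {
    "opening_hours": "Our branches are open 9am to 5pm, Monday to Friday.",
}


def handle_check_balance(customer_id):
    """Data look-up: fetch the balance for the identified customer."""
    balance = ACCOUNTS.get(customer_id)
    if balance is None:
        return "I could not find that account."
    return f"Your account balance is ${balance:,.2f}."


def handle_opening_hours(customer_id):
    """Knowledge-base answer: same reply regardless of the customer."""
    return KNOWLEDGE_BASE["opening_hours"]


# The conversational design: which action each intent triggers.
HANDLERS = {
    "check_balance": handle_check_balance,
    "opening_hours": handle_opening_hours,
}


def fulfil(intent, customer_id):
    """Run the action designed for this intent, or escalate to a person."""
    handler = HANDLERS.get(intent)
    if handler is None:
        return "Let me connect you with an agent."
    return handler(customer_id)


if __name__ == "__main__":
    print(fulfil("check_balance", "alice"))
    print(fulfil("opening_hours", "alice"))
    print(fulfil("dispute_charge", "alice"))
```

In this sketch, an intent with no designed action escalates to a human agent, which mirrors the point that conversations typically end with an action or an escalation.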

A conversational AI model typically has a very narrow focus, so it can respond to questions within that one domain. Generative AI models are bigger; they have billions of parameters, well above typical conversational AI models, so they can understand a larger scope of language. It’s like responding to an essay question about a book after reading a single page (or chapter) versus responding to an essay question after reading the entire book, the author’s biography, the history behind the book and other reviews of the book.

The problem with shifting attention fully to generative AI is that deploying a bot that can have a fun conversation about a multitude of topics won’t solve the problems that real customers bring to the contact centre. A more fluid conversation won’t overcome the issues that customers have experienced when using chatbots.

Data shows that of those who have used chatbots:

The issue with bots isn’t how fluid and fun the conversation with the bot can be. A consumer that reaches out for help is rarely in the mood to chit chat, though they might be using ChatGPT to write a strongly worded email expressing their frustration with a product or a service after the service bot failed to help them resolve an issue in a timely manner.

The goal of a customer reaching out to a brand looking for service or support isn’t to have a chat; they want to get something resolved. This can be time sensitive. For example, this could be because a customer’s loan hasn’t been approved and they’re supposed to close on their house in the next week. In this instance, they need more than a discussion about how mortgages work and how interest rates are calculated.

Another example is when a patient calls their health care provider because they aren’t feeling well and need to schedule an appointment. They don’t want to learn more about their condition or other conditions to try and figure out what’s wrong on their own (that rarely leads to a good health outcome). It could also be a situation in which a domestic abuse victim is looking for help — and the more time they spend typing and conversing, the more likely they are to be in grave danger.

These are all situations that our customers have faced where conversational AI proved an invaluable solution.

In a recent webinar, David Myron, Principal Analyst, Customer Engagement at Omdia shared the do’s and don’ts for AI success from “The State of Digital CX 2023” report. While he outlined multiple statistics and insights, one is critical to understanding whether implementing AI to automate conversation will succeed:

It’s not about the conversation; it’s about the outcome. In our work with customers, we’ve found that 85% of the work isn’t in training the bot to speak but in making sure it’s integrated into the flow, integrated with the right data and integrated into analytics.

Mitch Mason, the Principal Product Manager for Conversational AI, covers all these topics in the latest Tech Talks in 20 “Best practices for using chatbots to enhance the customer journey.” He also illustrates how this applies in the real world.

The key to getting the most out of conversational AI lies in:

Learn more about Genesys AI in the recent Tech Talks in 20 podcast on how to use chatbots to improve the customer journey.
